Random defects respond to cleanliness and particle control programs. Systematic defects require process adjustment. Treating them the same way wastes engineering time: you can run an aggressive cleaning program and reduce particle counts to near-zero, and the yield problem will persist unchanged if the defects were systematic to begin with. Getting this classification right at the start of a yield improvement effort is the highest-leverage decision in the process.

What "Random" and "Systematic" Actually Mean

In yield modeling, "random" defects are those with no spatial correlation: every location on the wafer surface is equally likely to receive one, and defect counts per die follow a Poisson distribution or, when mild clustering is present, a negative binomial distribution. They originate from particle contamination events: particles in the process gas, on equipment surfaces, shed by robot handling components, or generated by equipment degradation. They are random in the sense that their positions are not determined by any systematic property of the process; they land wherever they land by chance.

Systematic defects have a predictable spatial relationship to the process or the design. They occur at specific locations — consistently at specific wafer zones (edge, center, specific angular positions), consistently at specific layout features (dense arrays versus isolated lines, line-end versus body, specific CDs), or consistently correlated with specific equipment states (chamber condition, process recipe parameters). Their positions are not random; they reflect a deterministic process effect.

The Yield Model Difference

Random defects at low to moderate density are handled well by the negative binomial yield model, where yield = (1 + D0 * A / alpha)^(-alpha). D0 is defect density, A is the critical area, and alpha is the clustering parameter. Improvements to random yield require either reducing D0 (fewer particles per unit area) or reducing A (smaller critical area through design optimization). Both levers are well-understood and connect directly to measurable metrics.
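
To make the two levers concrete, here is a minimal sketch of the formula above in Python. The function names and the numeric values for D0, A, and alpha are illustrative assumptions, not measurements from any fab. The Poisson form is included only as the well-known limit the model approaches when alpha becomes very large (no clustering).

```python
import math


def negative_binomial_yield(d0: float, area: float, alpha: float) -> float:
    """Yield = (1 + D0 * A / alpha)^(-alpha), as given in the text."""
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    return (1.0 + d0 * area / alpha) ** (-alpha)


def poisson_yield(d0: float, area: float) -> float:
    """Limit of the model for very large alpha (no clustering): exp(-D0 * A)."""
    return math.exp(-d0 * area)


# Illustrative values only (assumptions, not fab data).
D0 = 0.5    # defects per cm^2
AREA = 1.0  # critical area in cm^2

for alpha in (0.5, 2.0, 1000.0):
    y = negative_binomial_yield(D0, AREA, alpha)
    print(f"alpha={alpha:>6}: yield={y:.4f}")
print(f"Poisson limit: yield={poisson_yield(D0, AREA):.4f}")
```

Halving either `D0` or `AREA` in this sketch has the same effect on yield, because the two only enter the model through their product.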

Systematic defects do not fit the negative binomial model well. A systematic defect that kills every die in a specific layout zone — for example, every die at the wafer edge, or every die containing a specific critical feature — is not random. Its yield impact cannot be reduced by cleaning programs. The appropriate yield model for systematic defects is a fault model that accounts for the spatial coverage of the defect mechanism relative to the die layout.

Misapplying the negative binomial model to systematic yield loss produces a systematic overestimate of D0 — the model interprets the systematic loss as a high-density random defect background — which leads to cleaning programs being prescribed when process adjustment is what is needed. This is not a theoretical concern; it is a common analytical error in fabs where yield models are fit to probe data without distinguishing defect types.
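
A small worked example shows how large the overestimate can be. The sketch below assumes, for illustration only, that observed yield is the product of a random-defect yield and a systematic survival fraction (the share of die not killed by the systematic mechanism). That multiplicative form, the helper name `implied_d0`, and all numbers are assumptions made for this example.

```python
def negative_binomial_yield(d0, area, alpha):
    """Yield = (1 + D0 * A / alpha)^(-alpha)."""
    return (1.0 + d0 * area / alpha) ** (-alpha)


def implied_d0(observed_yield, area, alpha):
    """Invert the model: the D0 that a random-only fit would report."""
    return (alpha / area) * (observed_yield ** (-1.0 / alpha) - 1.0)


# Illustrative values only (assumptions, not fab data).
TRUE_D0 = 0.2               # actual random defect density, per cm^2
AREA = 1.0                  # critical area, cm^2
ALPHA = 2.0                 # clustering parameter
SYSTEMATIC_SURVIVAL = 0.85  # 15% of die killed by a systematic mechanism

random_yield = negative_binomial_yield(TRUE_D0, AREA, ALPHA)
observed_yield = SYSTEMATIC_SURVIVAL * random_yield

recovered = implied_d0(random_yield, AREA, ALPHA)   # no systematic loss
inflated = implied_d0(observed_yield, AREA, ALPHA)  # systematic loss mixed in

print(f"true D0:          {TRUE_D0:.3f}")
print(f"fit, random only: {recovered:.3f}")
print(f"fit, mixed loss:  {inflated:.3f}")
```

With these numbers the random-only fit reports a D0 of about 0.39, nearly double the true 0.2, even though no additional particles are present.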

Statistical Tests for Distinguishing the Two

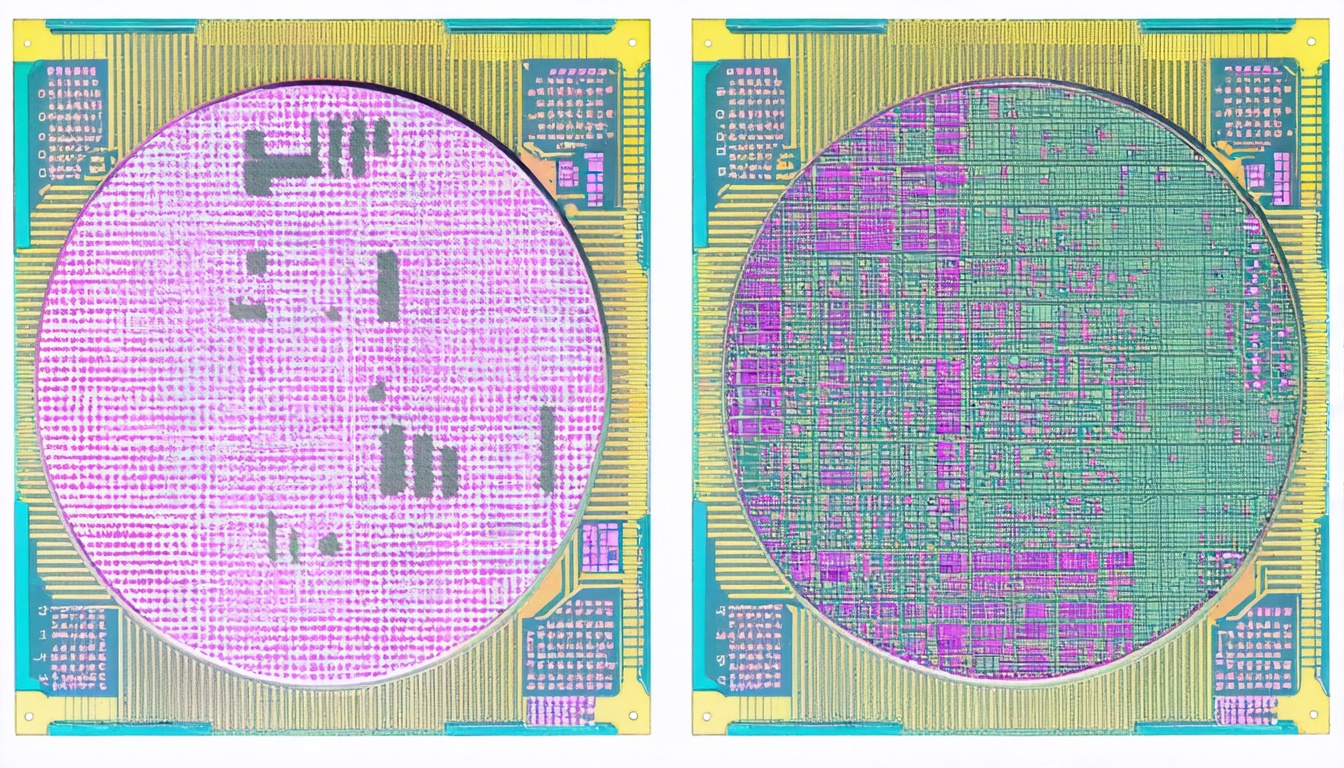

Several statistical tests help distinguish random from systematic yield contributions in wafer map data. The most direct is spatial autocorrelation: a wafer map with random defect distribution has near-zero spatial autocorrelation (defect presence at one location does not predict defect presence at nearby locations). A wafer map with systematic defects shows positive spatial autocorrelation — defects cluster in predictable zones.

Moran's I statistic quantifies spatial autocorrelation on a scale that runs from roughly -1 (perfect dispersion, as in a checkerboard) through values near 0 (spatial randomness) to +1 (perfect clustering). For typical production wafer maps, a Moran's I above 0.3 is a strong indicator of systematic defect contribution. The specific zone of clustering (edge, center, angular pattern) then points to the responsible process mechanism.
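
As a rough illustration of the statistic, the sketch below computes Moran's I on a die grid using binary rook adjacency (each die is a neighbor of the die directly above, below, left, and right of it). The grid representation, with `None` marking positions that are off the wafer, and the choice of rook weights are assumptions made for this example; other weighting schemes will give different values.

```python
def morans_i(grid):
    """Moran's I for a 2D die grid with binary rook-adjacency weights.

    grid: list of rows; each entry is a number (e.g. defect count or
    0/1 fail flag), or None for positions that are not on the wafer.
    """
    cells = {(r, c): v
             for r, row in enumerate(grid)
             for c, v in enumerate(row)
             if v is not None}
    n = len(cells)
    mean = sum(cells.values()) / n
    denom = sum((v - mean) ** 2 for v in cells.values())
    if denom == 0:
        raise ValueError("map has no variation; Moran's I is undefined")

    num = 0.0
    weight_total = 0
    for (r, c), v in cells.items():
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            neighbor = cells.get((r + dr, c + dc))
            if neighbor is not None:
                num += (v - mean) * (neighbor - mean)
                weight_total += 1
    return (n / weight_total) * (num / denom)


# Toy maps: 1 = failing die, 0 = passing die.
checkerboard = [[(r + c) % 2 for c in range(4)] for r in range(4)]
half_and_half = [[1, 1, 0, 0] for _ in range(4)]

print(f"checkerboard:  {morans_i(checkerboard):+.3f}")
print(f"half-and-half: {morans_i(half_and_half):+.3f}")
```

The checkerboard map gives -1 (perfect dispersion), and the half-and-half map gives about +0.67, well above the 0.3 level mentioned above.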

A complementary test is wafer-to-wafer position consistency: if the same die positions consistently show elevated fail rates across multiple wafers from the same lot, or across multiple lots processed on the same tool, the defect source is systematic. If the failing die positions change randomly wafer-to-wafer, the source is random. This test needs probe yield maps with die-level resolution, aligned to inspection defect coordinates. That data connection depends on the spatial correlation infrastructure described elsewhere in this blog.
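
One way to sketch the position-consistency test is to count how often each die position fails across a set of wafers and ask whether that count is plausible if fails were spread evenly at the overall fail rate. The example below uses an exact binomial tail probability for that check. The data layout (one dict per wafer mapping die position to a 0/1 fail flag), the function names, and the 0.001 cutoff are all hypothetical, and the sketch ignores multiple-testing corrections.

```python
import math


def binomial_tail(k, n, p):
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(math.comb(n, i) * p ** i * (1 - p) ** (n - i)
               for i in range(k, n + 1))


def repeat_fail_positions(wafers, p_value_cutoff=0.001):
    """Die positions that fail more often than chance would explain.

    wafers: list of dicts mapping (row, col) -> 1 (fail) or 0 (pass);
    every wafer is assumed to cover the same die positions.
    """
    n = len(wafers)
    fails = {pos: sum(w[pos] for w in wafers) for pos in wafers[0]}
    overall_rate = sum(fails.values()) / (n * len(fails))
    return sorted(pos for pos, k in fails.items()
                  if k > 0 and binomial_tail(k, n, overall_rate) < p_value_cutoff)


# Toy data: 10 wafers on a 5x5 die grid. Die (0, 0) fails on every wafer;
# each wafer also has one fail at a position that changes wafer to wafer.
wafers = []
for i in range(10):
    wafer = {(r, c): 0 for r in range(5) for c in range(5)}
    wafer[(0, 0)] = 1                        # repeating (systematic) fail
    wafer[((i % 4) + 1, (i * 2) % 5)] = 1    # wandering (random-like) fail
    wafers.append(wafer)

flagged = repeat_fail_positions(wafers)
print(flagged)
```

In this toy data only position (0, 0) is flagged; the single wandering fail on each wafer is consistent with the background rate.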

Cluster Analysis for Mixed Populations

Most wafers have a mixture of random and systematic defects. The random background is always present at some density; the systematic contribution appears on top of it. Separating the two requires decomposing the wafer map into components. A standard approach is to fit a spatial smoothing function to the wafer map and identify the locally elevated zones as systematic contributors; the remaining residual, after removing the systematic spatial component, represents the random background.

The decomposition is imperfect: small systematic contributions can be buried in the random background noise, and high random density can mask the spatial structure of a systematic contribution. Averaging across multiple wafers from the same process step and the same equipment ID improves separation by reinforcing the consistent spatial pattern of systematic defects while averaging out the random variation. Twenty to thirty wafers is typically enough for the spatial pattern to emerge clearly above the random noise when the systematic contribution raises local defect density at least 20% above background.
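
The stacking-and-smoothing idea can be sketched in a few lines. The example below averages per-die defect counts across wafers, smooths the stacked map with a 3x3 neighborhood mean, takes the median of the smoothed map as the random background, and marks die more than 20% above that background as the systematic zone. The 3x3 window, the median as background estimator, and the function names are assumptions made for this illustration, not a prescribed method.

```python
from statistics import median


def stack_wafers(wafers):
    """Per-die mean defect count across wafers (same positions on each)."""
    n = len(wafers)
    return {pos: sum(w[pos] for w in wafers) / n for pos in wafers[0]}


def smooth(stacked):
    """3x3 neighborhood mean, using only positions present on the map."""
    out = {}
    for (r, c) in stacked:
        vals = [stacked[(r + dr, c + dc)]
                for dr in (-1, 0, 1) for dc in (-1, 0, 1)
                if (r + dr, c + dc) in stacked]
        out[(r, c)] = sum(vals) / len(vals)
    return out


def decompose(wafers, elevation=1.2):
    """Return (background level, {position: excess density}) for the stack."""
    smoothed = smooth(stack_wafers(wafers))
    background = median(smoothed.values())
    zone = {pos: v - background
            for pos, v in smoothed.items()
            if v > elevation * background}
    return background, zone


# Toy data: 20 wafers on a 6x6 grid, 1 defect per die everywhere,
# 3 defects per die along the top row (row 0).
wafers = [{(r, c): (3 if r == 0 else 1) for r in range(6) for c in range(6)}
          for _ in range(20)]

background, zone = decompose(wafers)
print(f"background level: {background}")
print(f"rows in systematic zone: {sorted({r for r, _ in zone})}")
```

Note that the smoothing step spreads the elevated region into the adjacent row, so the flagged zone is slightly wider than the true one. That is one face of the imperfection described above.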

Response Strategies by Defect Type

For confirmed random defects, the response strategy focuses on source reduction. Particle sizing from the inspection data (the size distribution of detected particles) often identifies the generation mechanism — large particles from mechanical contact, mid-size particles from CVD flaking, small particles from plasma-generated contamination. Once the size distribution points to a source category, the engineering response is specific: bevel cleaning, chamber seasoning, filter replacement, or robot end-effector replacement. Blanket cleaning programs that address all contamination sources simultaneously are less efficient but appropriate when the source is not yet identified.

For confirmed systematic defects, the response strategy requires identifying the process parameter driving the systematic signature. If the spatial pattern matches a known process non-uniformity (edge-high etch rate, center-high deposition), the response is process recipe adjustment. If the pattern matches a design sensitivity (specific layout features consistently failing), the response involves working with design engineers to add design rules or layout optimizations that reduce sensitivity. If the pattern matches equipment state (specific chamber, specific time period), the response is equipment maintenance or replacement.

The Cost of Misclassification

The operational cost of misclassifying a systematic defect as random is a sequence of cleaning and contamination control interventions that produce no yield improvement. In a production environment, each such intervention takes engineering time to plan and execute. Repeated non-productive interventions are expensive — typically $20,000 to $100,000 in engineering and downtime cost per major cleaning event — and they erode confidence in the yield engineering team's analytical capability.

The cost of misclassifying a random defect as systematic is less severe but still significant: process recipe changes made to address a perceived systematic pattern can destabilize a process window that was previously stable, potentially introducing new yield problems while the original random-defect issue persists.

The first question to ask at the start of any yield improvement effort, before any response action is planned, is which category the defects belong to. The rest of the analysis follows from that answer.

How SynthKernel Automates the Classification

SynthKernel computes spatial autocorrelation and clustering statistics on every wafer map as part of standard defect ingestion processing. Wafer maps with Moran's I above a configurable threshold are automatically flagged as having systematic defect content, and the spatial pattern is extracted and matched to the archetype library. The output is a per-inspection-event classification: random-dominant, systematic-dominant, or mixed, with the spatial pattern descriptor attached to the systematic component. That classification is available to the root cause ranking engine and to the alert routing logic, so that systematic-classified events route to process engineering and random-classified events route to contamination control — before any human review is required.