An accuracy number without context tells you nothing useful. 97.3% sounds good. Whether it is good enough — for your node, your defect mix, your review sampling rate — requires understanding where that number comes from, what it covers, and what the 2.7% gets wrong. This post is that context.

How the 97.3% Number Was Measured

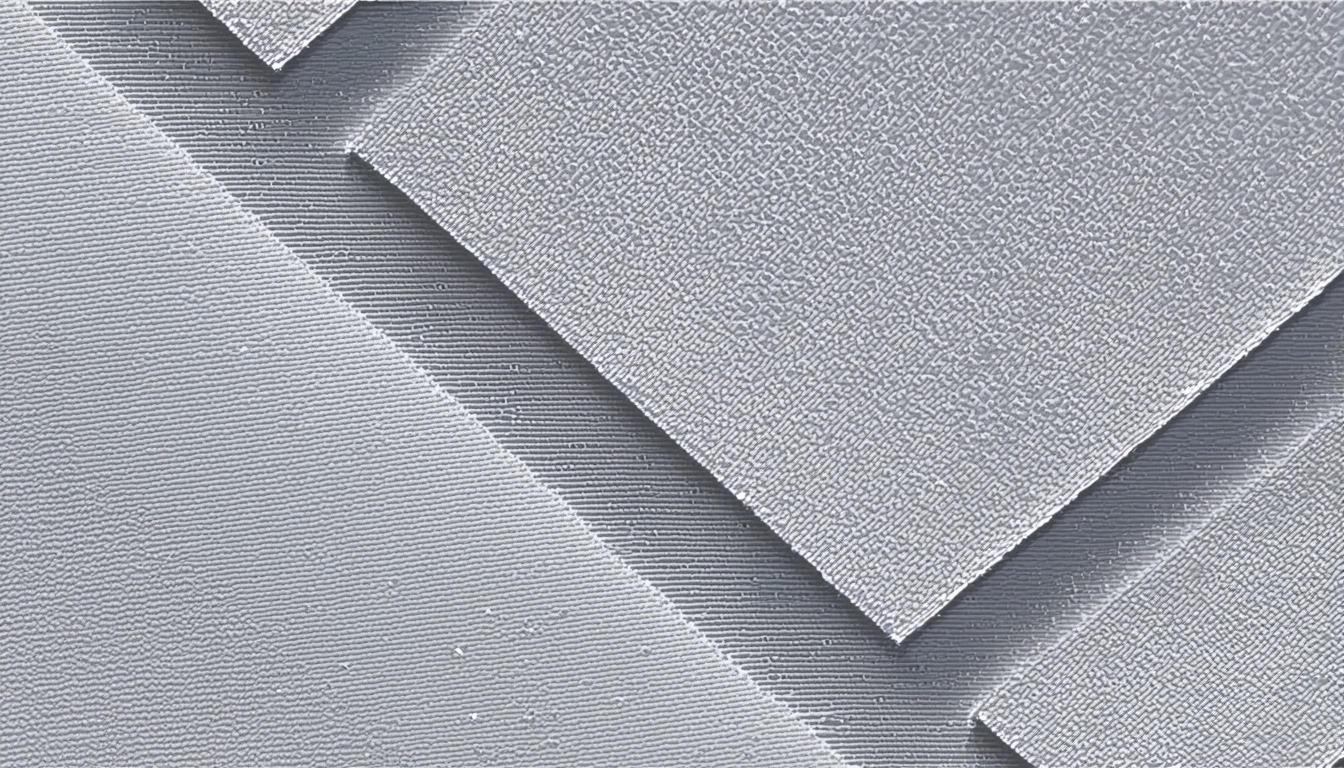

The 97.3% figure is top-1 classification accuracy on a held-out test set of 84,000 CDSEM review images drawn from 14nm, 10nm, and 7nm logic process flows. The images were collected from three different fab sites using KLA eDR-7000, eDR-5200, and ASML HMI eScan400 review SEMs. The test set was not used during training or hyperparameter tuning.

The classification task covers eight defect type categories: bridging, particle (organic), particle (metal), CD deviation, pattern collapse, scratch, edge roughness, and unknown/nuisance. These eight categories represent approximately 91% of the defect types encountered at the nodes we target. The remaining 9% are rare defect types — including certain epitaxial growth defects and some node-specific patterning artifacts — that appear infrequently enough that we do not have sufficient labeled examples to train reliable classifiers for them.

What Gets Misclassified

The error rate is not evenly distributed across defect types. Performance varies by category:

- Bridging: 98.6% accuracy. High confidence. Bridging has a distinctive morphology in the feature-scale CDSEM images we use.

- Particle (organic): 96.1% accuracy. Most errors are confusion with the unknown/nuisance class on low-contrast images.

- Particle (metal): 97.8% accuracy. Metal particles have high CDSEM contrast that makes them visually distinctive.

- CD deviation: 94.4% accuracy. This is the most error-prone of the true defect categories; only unknown/nuisance scores lower. CD deviation at ±5nm on a 7nm node can be subtle enough that even expert reviewers disagree.

- Pattern collapse: 98.1% accuracy. Visually dramatic — model performance reflects that.

- Scratch: 97.9% accuracy.

- Edge roughness: 95.2% accuracy. False negatives at low severity levels are the main error mode.

- Unknown/nuisance: 91.3% accuracy. By definition the hardest category — nuisance events are diverse.
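To see how the per-category figures relate to a single headline number, the sketch below computes the unweighted (macro) average of the eight accuracies listed above, and a count-weighted average. It assumes each per-category figure is the fraction of that category's images labeled correctly. The class counts are hypothetical and exist only to show the mechanics; the real class mix of the test set is not given in this post.

```python
# Per-category accuracies quoted in the list above (percent).
per_class_accuracy = {
    "bridging": 98.6,
    "particle_organic": 96.1,
    "particle_metal": 97.8,
    "cd_deviation": 94.4,
    "pattern_collapse": 98.1,
    "scratch": 97.9,
    "edge_roughness": 95.2,
    "unknown_nuisance": 91.3,
}


def macro_average(acc):
    """Unweighted mean: every category counts equally."""
    return sum(acc.values()) / len(acc)


def weighted_average(acc, counts):
    """Mean weighted by the number of test images in each category."""
    total = sum(counts[name] for name in acc)
    return sum(acc[name] * counts[name] for name in acc) / total


# HYPOTHETICAL class counts, for illustration only.
hypothetical_counts = {
    "bridging": 30,
    "particle_organic": 10,
    "particle_metal": 20,
    "cd_deviation": 5,
    "pattern_collapse": 15,
    "scratch": 12,
    "edge_roughness": 5,
    "unknown_nuisance": 3,
}

macro = macro_average(per_class_accuracy)
weighted = weighted_average(per_class_accuracy, hypothetical_counts)
print(f"macro average: {macro:.1f}%")
print(f"weighted average (hypothetical mix): {weighted:.1f}%")
```

The macro average of the listed figures is about 96.2%, below the 97.3% headline. Under the assumption above, that gap is what you would expect when the test set contains more images from the higher-accuracy categories than from the harder ones. If your fab's defect mix leans toward CD deviation or nuisance events, the accuracy you observe may sit closer to the macro figure.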

The Node Dependency

The 97.3% figure is an average across 14nm, 10nm, and 7nm nodes. Performance varies by node. At 14nm, accuracy is 98.1%. At 7nm, it is 96.4%. The primary reason for the drop is image quality relative to feature size. At 7nm, the features being inspected are at or near the resolution limit of the review SEM. Image contrast decreases, noise increases, and the morphological features that distinguish defect types become less visually distinct. The model is trained on enough 7nm examples that it handles the typical cases well, but its confidence margins are narrower at 7nm than at 14nm.

For customers running processes at 5nm and below, we are explicit that our current model performance at those nodes is approximately 94% and should be treated as a triage tool rather than a definitive classifier. We have an active effort to expand 3nm and 5nm training data through partnerships with EUV-equipped fabs, and we expect to update those model benchmarks in 2026.

Comparison Against Human Expert Review

For context: inter-rater agreement among expert CDSEM reviewers on the same 84,000-image test set is approximately 94.7%. The human experts were three senior process engineers with at least eight years of CDSEM review experience each, working independently without time pressure. Under production conditions — with review throughput pressure and fatigue — human reviewer agreement typically drops to around 88-90% based on our validation data.
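This post does not spell out how the agreement figure was computed. One common way to score agreement among several reviewers is mean pairwise agreement, sketched below as an assumption rather than a description of the actual procedure. The reviewer names and labels are toy data.

```python
from itertools import combinations


def pairwise_agreement(labels_by_rater):
    """Mean, over all rater pairs, of the fraction of images labeled identically."""
    raters = list(labels_by_rater)
    n_images = len(labels_by_rater[raters[0]])
    pair_scores = []
    for a, b in combinations(raters, 2):
        same = sum(
            x == y for x, y in zip(labels_by_rater[a], labels_by_rater[b])
        )
        pair_scores.append(same / n_images)
    return sum(pair_scores) / len(pair_scores)


# Toy labels for four images from three hypothetical reviewers.
toy_labels = {
    "reviewer_a": ["bridging", "scratch", "cd_deviation", "particle_metal"],
    "reviewer_b": ["bridging", "scratch", "edge_roughness", "particle_metal"],
    "reviewer_c": ["bridging", "scratch", "cd_deviation", "particle_organic"],
}

agreement = pairwise_agreement(toy_labels)
print(f"mean pairwise agreement: {agreement:.1%}")
```

Raw agreement of this kind does not correct for agreement that would occur by chance, so chance-corrected statistics such as Cohen's or Fleiss' kappa will generally give lower values on the same labels.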

The model's 97.3% accuracy is higher than the 94.7% agreement rate among experts on the same image set. The two numbers are not strictly the same measurement: accuracy is scored against reference labels, while agreement compares reviewers with one another. Nor does the result mean the model is a replacement for human judgment. It means the model is useful for automated triage: flagging the clear cases and routing the ambiguous ones to human reviewers. The practical benefit is that it filters out approximately 70-75% of review images as clear classifications, allowing engineer time to concentrate on the 25-30% of cases where classification is genuinely uncertain.

The Sampling Rate Caveat

Classification accuracy is only part of the yield analysis picture. A 97.3% accurate classifier applied at a 0.5% review sampling rate will still miss most defect events at low to moderate defect density, because 99.5% of detected sites are never reviewed. The classification model cannot compensate for insufficient sampling. This is a process design question, not a model question, but it affects how much value the classifier actually delivers in practice.

For defect types with high wafer impact (bridging, pattern collapse), most production fabs run review sampling rates between 5% and 20% of detected defect sites, which is enough for the classifier to provide statistically meaningful composition estimates. For lower-impact categories where sampling is at 1-2%, the classifier output should be treated as directional rather than precise. We communicate this directly to customers during onboarding and include sampling rate context in the alerts the system generates.
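The difference between "directional" and "statistically meaningful" can be made concrete with a rough margin-of-error calculation. The sketch below uses the normal approximation to a binomial proportion and assumes a perfect classifier, so it isolates the sampling effect only. The wafer numbers (2,000 detected sites, a defect class making up 20% of them) are hypothetical.

```python
import math


def reviewed_count(detected_sites, sampling_rate):
    """Expected number of detected sites sent to SEM review."""
    return detected_sites * sampling_rate


def composition_margin(fraction, n_reviewed, z=1.96):
    """Approximate 95% margin of error for a class fraction.

    Normal approximation to the binomial; it is crude for very small
    samples, which is exactly where the margin is already large.
    """
    if n_reviewed <= 0:
        return float("inf")
    return z * math.sqrt(fraction * (1.0 - fraction) / n_reviewed)


# HYPOTHETICAL wafer: 2,000 detected sites, one class at 20% of defects.
detected = 2000
true_fraction = 0.20

for rate in (0.005, 0.02, 0.10, 0.20):
    n = reviewed_count(detected, rate)
    margin = composition_margin(true_fraction, n)
    print(f"rate {rate:.1%}: ~{n:.0f} reviewed, 20% +/- {margin:.1%}")
```

With these assumed numbers, a 0.5% rate reviews about 10 sites and the estimate carries a margin of roughly 25 percentage points, while a 10% rate reviews 200 sites and brings the margin down to roughly 5.5 points. Classifier error adds to this uncertainty; it does not replace it.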

Model Behavior on Novel Defect Types

One of the questions we get most often is: what happens when a defect type appears that the model has not seen? This is a real concern for process teams introducing new materials or new process steps. The answer is that the model will classify novel defects into the most visually similar existing category, usually with lower confidence scores. Low-confidence classifications are flagged differently in the SynthKernel alert system — they route to human review rather than auto-binning.

The confidence threshold for auto-binning is configurable per fab and per defect category. Customers with more conservative quality requirements typically set the threshold higher, meaning more events route to human review. Customers with well-characterized processes and high review throughput costs often set it lower. The default is calibrated to produce approximately the same false-positive rate as an experienced engineer reviewing under normal conditions — but that default should be validated against the customer's specific process before production deployment.
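A per-fab, per-category threshold can be pictured as a nested lookup with a fallback default. The sketch below is illustrative only: the fab names, the override values, the 0.85 default, and the function names are all hypothetical and do not describe the actual SynthKernel configuration format.

```python
# HYPOTHETICAL default; the real default is calibrated as described above.
DEFAULT_THRESHOLD = 0.85

# HYPOTHETICAL overrides, keyed by fab and then by defect category.
THRESHOLDS = {
    "fab_a": {"cd_deviation": 0.95, "bridging": 0.80},  # conservative on CD
    "fab_b": {},  # no overrides, uses the default everywhere
}


def auto_bin_threshold(fab, category):
    """Look up the threshold for a fab/category pair, falling back to the default."""
    return THRESHOLDS.get(fab, {}).get(category, DEFAULT_THRESHOLD)


def route(fab, category, confidence):
    """Return 'auto_bin' at or above the threshold, else 'human_review'."""
    if confidence >= auto_bin_threshold(fab, category):
        return "auto_bin"
    return "human_review"


print(route("fab_a", "cd_deviation", 0.90))  # below fab_a's 0.95 override
print(route("fab_a", "bridging", 0.82))      # above fab_a's 0.80 override
print(route("fab_b", "bridging", 0.82))      # below the 0.85 default
```

Raising a threshold sends more events to human review for that category; lowering it auto-bins more of them. Keeping the overrides sparse makes it easy to see where a fab deviates from the default.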

What Accuracy Does Not Capture

The accuracy metric measures whether the model assigns the right label to each image. It does not measure whether the classification is useful for yield improvement. A model that perfectly classifies every particle defect as "particle" is less useful than a model that also estimates whether the particle is likely to be a yield killer based on its location, size, and composition indicators. That second capability — yield impact scoring — is where we focus development effort beyond baseline accuracy.

Yield impact scoring uses the classification output as input, combined with die map position, process layer, and historical yield correlation data for the same defect type at the same process step. A particle on open field at M1 on a 14nm logic process has a very different yield impact than a same-sized particle bridging two critical nets. The classifier sees them both as "particle (organic)" at 96% confidence. The yield impact score differentiates them.

When to Involve Human Reviewers

Our recommendation: human expert review should focus on three scenarios. First, any classification below 85% confidence — these are genuine hard cases where the model is uncertain and the uncertainty matters for yield decisions. Second, any novel defect type appearing at a new process step or after a recipe change — the model has not seen this variant before, and human review establishes the ground truth that enables retraining. Third, the CD deviation category at sub-7nm nodes — this is where model performance is weakest relative to the yield impact of the defect type, and the combination of high impact and moderate accuracy argues for human verification.
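The three scenarios can be expressed as a small rule check that returns the reasons an event should go to a human. This is a sketch under assumptions: the `ReviewEvent` fields and the reason strings are hypothetical names, and the second scenario is approximated by a flag marking events from a new process step or a recent recipe change, since the model cannot itself know that a defect is novel.

```python
from dataclasses import dataclass


@dataclass
class ReviewEvent:
    """HYPOTHETICAL record for one classified review image."""
    category: str
    confidence: float                  # 0.0 to 1.0
    node_nm: float                     # process node, e.g. 14, 7, 5
    new_step_or_recipe_change: bool    # set by process metadata, not the model


def human_review_reasons(event, confidence_floor=0.85):
    """Return the list of reasons this event should be reviewed by a human."""
    reasons = []
    # Scenario 1: the model is uncertain.
    if event.confidence < confidence_floor:
        reasons.append("low_confidence")
    # Scenario 2: a possible novel variant after a process change.
    if event.new_step_or_recipe_change:
        reasons.append("possible_novel_defect")
    # Scenario 3: CD deviation at sub-7nm nodes.
    if event.category == "cd_deviation" and event.node_nm < 7:
        reasons.append("cd_deviation_sub_7nm")
    return reasons


events = [
    ReviewEvent("bridging", 0.99, 14, False),
    ReviewEvent("cd_deviation", 0.97, 5, False),
    ReviewEvent("scratch", 0.60, 7, True),
]
for event in events:
    reasons = human_review_reasons(event)
    print(event.category, "->", reasons or "auto-bin")
```

Returning the reasons rather than a bare yes/no keeps a record of why each event was escalated, which is useful when reviewed events later feed retraining.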

For everything else — high-confidence classifications of well-characterized defect types at qualified nodes — automated binning is both faster and more consistent than human review under production conditions. The goal is not to eliminate human judgment; it is to ensure that human judgment is applied where it adds the most value, rather than being spread thin across thousands of routine review events per day.