Rule-based bins are fast to set up and easy to audit. AI classifiers are typically more accurate and can pick up new defect types without hand-written rules, though not without retraining and validation. Neither is universally better. The right choice depends on your process stability, your team's capacity for model validation, and what you need to do with the classification output. Both approaches have failure modes that the sales pitches for each rarely mention. This post covers both.

How Rule-Based Bins Work

Rule-based defect classification on optical and SEM inspection tools works by defining thresholds on measured defect attributes: size, shape metrics (aspect ratio, circularity, roughness of boundary), gray-level statistics from the inspection image, and sometimes location relative to design features. The tool applies these rules to each detected event and assigns a bin code. Rules are defined by process engineers in the tool's recipe setup interface and can be adjusted without any software development.

The principal advantage is auditability. The classification rule for "particle (large)" is something like: size > 0.3 microns AND circularity > 0.7 AND gray-level contrast > 0.4. You can state the rule in a sentence, verify it against any classified image, and modify it directly if it produces wrong classifications. This matters for process engineers who need to explain to management why a specific lot was held or dispositioned — the rule-based classification provides a transparent decision trail.
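The example rule above can be written out as a small predicate. This is a minimal sketch, not any inspection tool's recipe format: the `Defect` fields, the `classify` function, and the `"unclassified"` fallback label are assumptions made for illustration. Only the three thresholds come from the rule quoted above.

```python
from dataclasses import dataclass


@dataclass
class Defect:
    # Hypothetical attribute names; real tools expose their own fields.
    size_um: float       # defect size in microns
    circularity: float   # 0 (elongated) to 1 (perfect circle)
    contrast: float      # gray-level contrast, assumed normalized to 0..1


def classify(defect: Defect) -> str:
    """Apply the example "particle (large)" rule; otherwise fall through."""
    if defect.size_um > 0.3 and defect.circularity > 0.7 and defect.contrast > 0.4:
        return "particle (large)"
    # Assumed catch-all label for events that no rule claims.
    return "unclassified"
```

Because the whole decision is one boolean expression, anyone reviewing a held lot can check a classified event against it by hand.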

Where Rule-Based Bins Break Down

Rule-based bins fail predictably in three situations. First, when the process changes: a new process layer, a new material, or a new equipment recipe often introduces defect types or changes the morphology of existing defect types enough that the existing rules classify them incorrectly. Updating rules requires manual effort from a process engineer who has reviewed enough examples of the new defect type to define accurate thresholds. In a fast-moving process development environment, the rules fall behind.

Second, when defect morphology is continuous rather than discrete: the boundary between "particle (metal)" and "particle (organic)" in electron microscopy images is often gradual, not sharp. Rules that work on the clear cases (definitely metal, definitely organic) will misclassify the intermediate cases, and those intermediate cases may be exactly the ones that correlate with yield events. Likewise, a single defect type whose bimodal size distribution straddles a size-based bin boundary will be split incorrectly across two bins.
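A toy illustration of the boundary problem, assuming a 0.3 micron bin boundary and made-up sizes for one physical defect type. The bin labels and the `size_bin` helper are hypothetical.

```python
from collections import Counter

SIZE_BOUNDARY_UM = 0.3  # assumed boundary between the two size bins


def size_bin(size_um: float) -> str:
    """Assign a bin from size alone, as a size-based rule would."""
    return "particle (large)" if size_um > SIZE_BOUNDARY_UM else "particle (small)"


# Illustrative sizes (microns), all from ONE defect type with one root cause.
sizes_um = [0.24, 0.27, 0.29, 0.31, 0.33, 0.36]
counts = Counter(size_bin(s) for s in sizes_um)
```

Here the six events land three in each bin, so a trend chart for either bin shows only half of the population.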

Third, at advanced nodes where image quality decreases relative to feature size: rule-based thresholds that work well at 28nm often fail at 7nm because the image signal-to-noise ratio decreases, the size measurements become less reliable at small defect sizes, and the morphological features that distinguish defect types are less visually distinct in noisier images. The same rules that achieve 95% accuracy at 28nm may drop to 80-85% accuracy at 7nm without any change in the rule definition.

How AI Classification Works at the Image Level

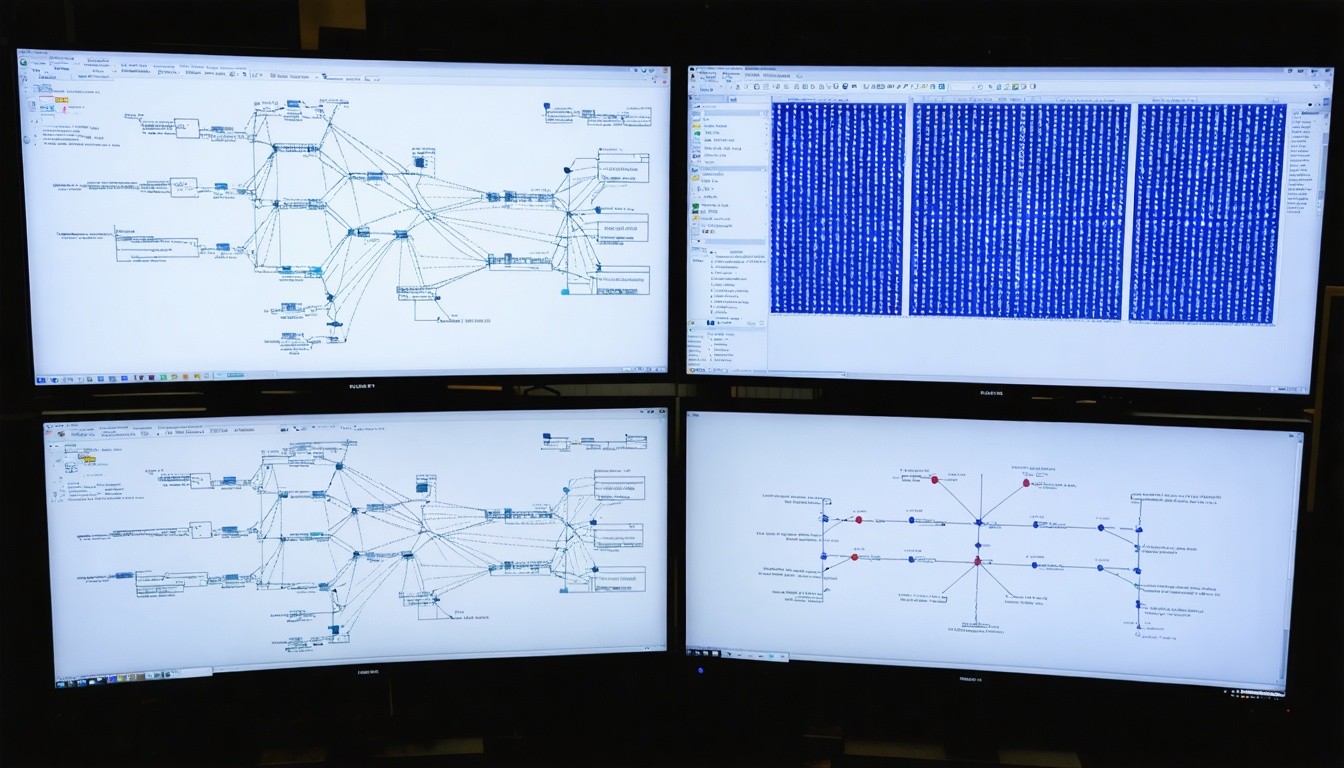

AI-based defect classification uses convolutional neural networks trained directly on labeled CDSEM images. Instead of extracting hand-defined features (size, circularity) and applying threshold rules, the model learns to extract relevant features from the raw image during training. The learned features often correspond to morphological characteristics that are too subtle or too complex to express as simple threshold rules — texture gradients, local context around the defect, relationships between defect shape and surrounding feature geometry.

This approach produces higher accuracy on well-represented defect types because the model can use the full information content of the image rather than the small set of metrics that rule-based thresholds rely on. The 97.3% top-1 accuracy we report for SynthKernel's classifier on 14nm-7nm CDSEM images is measurably higher than the 88-91% accuracy range we observe for well-configured rule-based bins on the same image set. The gap is largest for defect types with subtle morphological distinctions — CD deviation versus edge roughness, for example — where visual discrimination depends on context rather than simple size and shape metrics.
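For readers comparing classifiers on their own image sets, here is a minimal sketch of how top-1 accuracy and a per-class breakdown can be computed from expert labels and predicted classes. The function names are illustrative, and this is not a description of how the figures above were produced.

```python
from collections import defaultdict


def top1_accuracy(labels, predictions):
    """Fraction of events whose top predicted class matches the expert label."""
    if len(labels) != len(predictions):
        raise ValueError("labels and predictions must have the same length")
    if not labels:
        raise ValueError("need at least one labeled event")
    correct = sum(1 for y, p in zip(labels, predictions) if y == p)
    return correct / len(labels)


def per_class_accuracy(labels, predictions):
    """Accuracy broken out by expert label, to show where a classifier is weak."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for y, p in zip(labels, predictions):
        totals[y] += 1
        hits[y] += int(y == p)
    return {cls: hits[cls] / totals[cls] for cls in totals}
```

The per-class view matters because a single overall number can hide a large gap on exactly the subtle classes discussed above.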

Where AI Classification Falls Short

AI classifiers fail in situations that differ from the training distribution. A model trained on 14nm logic inspection images performs worse on 28nm DRAM images, on GaN images, or on images from a new inspection tool with different image characteristics. This is not a theoretical concern — it is the dominant failure mode in practice. Every time a process changes significantly, or a new tool is installed, the model needs validation on the new distribution and potentially retraining.

Retraining requires labeled examples of the new defect types or image conditions. Building a labeled training set requires expert reviewers to label images — which takes time, requires access to experts with the right process knowledge, and introduces labeling variability when different experts disagree on borderline cases. For a process team that changes recipes frequently and introduces new materials regularly, the overhead of maintaining AI model quality can approach the overhead of maintaining rule-based bins, negating the automation benefit.

Auditability is the second weakness. When an AI model classifies a defect and the classification drives a lot hold or a root cause conclusion, process engineers and managers often want to understand why. "The model assigned 94% confidence to the bridging class based on learned image features" is less satisfying than "the defect has a width greater than 0.2 microns spanning two metal lines and a circularity less than 0.4." Explainability tools for convolutional models (attention maps, Grad-CAM visualizations) help but do not fully substitute for rule transparency in a regulated or audited process environment.

The Hybrid Approach That Works Best in Practice

The most effective deployment we have seen is a hybrid where rule-based bins handle the clear, high-volume cases, and AI classification handles the ambiguous cases and new defect types. The separation is made by confidence: high-confidence rule-based classifications are accepted directly; classifications below a confidence threshold (or falling into a catch-all nuisance bin) are passed to the AI classifier for refinement. The AI classifier is also applied to new defect types that arrive without established rules.

This hybrid approach gets the auditability of rules for the common cases (where rules work well and the classification decision is defensible) and the flexibility of AI for the edge cases and evolving process conditions. It also allows incremental AI adoption: a team that is not ready to commit to full AI classification can start by applying AI only to the nuisance bin, where the rule-based system already acknowledges it cannot classify, and evaluate the improvement in classification quality there before expanding to other bins.
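The routing described above can be sketched as follows. Everything named here is an assumption for illustration: the threshold value, the `"nuisance"` label, and the idea that the rule engine reports a confidence score at all (for example, derived from how far an event sits from its thresholds). Both classifiers are passed in as plain callables so the sketch stays independent of any particular tool or model.

```python
from typing import Callable, Tuple

# Assumed values for illustration; tune per inspection step.
RULE_CONFIDENCE_THRESHOLD = 0.9
NUISANCE_BIN = "nuisance"

# Each classifier takes an event and returns (bin_label, confidence in 0..1).
Classifier = Callable[[dict], Tuple[str, float]]


def classify_hybrid(event: dict, rule_classifier: Classifier,
                    ai_classifier: Classifier) -> Tuple[str, str]:
    """Return (bin_label, source), where source records which path decided."""
    label, confidence = rule_classifier(event)
    if label != NUISANCE_BIN and confidence >= RULE_CONFIDENCE_THRESHOLD:
        # Clear case: keep the auditable rule-based result.
        return label, "rule"
    # Ambiguous or nuisance-bin event: defer to the AI classifier.
    ai_label, _ = ai_classifier(event)
    return ai_label, "ai"
```

Recording the source alongside the label keeps the decision trail intact: an auditor can see which events were decided by a stated rule and which were referred to the model.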

Validation Requirements for Each Approach

Rule-based bins require validation when rules are first established and when process conditions change. Validation involves reviewing a sample of classified events with an expert reviewer and confirming that the rule assignments match expert judgment. The sample size needed for statistically meaningful validation depends on the classification categories, their relative frequencies, and the accuracy being measured. Typically 200-500 images per defect class per inspection step is sufficient for a 95% confidence interval within ±3% on classification accuracy, provided per-class accuracy is around 90% or higher; lower accuracy needs a larger sample.
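The sample-size figure can be sanity-checked with the standard normal approximation to a binomial proportion. This is a rough sketch under that assumption, not a validation procedure. The function names are illustrative, and the approximation gets less reliable as accuracy approaches 100% or samples get small.

```python
import math

Z_95 = 1.96  # z-score for a two-sided 95% confidence interval


def ci_half_width(accuracy: float, n: int) -> float:
    """Normal-approximation half-width of the 95% CI on measured accuracy."""
    return Z_95 * math.sqrt(accuracy * (1.0 - accuracy) / n)


def images_needed(accuracy: float, margin: float) -> int:
    """Smallest sample size whose CI half-width is at most `margin`."""
    return math.ceil(Z_95 ** 2 * accuracy * (1.0 - accuracy) / margin ** 2)
```

Under this approximation, a ±3% margin needs about 203 images at 95% accuracy and about 385 at 90% accuracy, so the required sample grows as the classifier gets worse.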

AI classifiers require the same validation on the same schedule, plus additional monitoring for distribution shift: tracking confidence score distributions over time to detect when the production image distribution is drifting away from the training distribution. A well-calibrated AI classifier will show decreasing average confidence scores and increasing nuisance-bin fractions when it encounters images it was not well trained on. Monitoring these metrics provides early warning that model performance may be degrading, prompting targeted validation before the degradation affects production decisions.
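A minimal sketch of that monitoring, comparing a recent window of classifications against a baseline window captured at validation time. The tolerances, the `"nuisance"` label, and the function names are assumptions; a production monitor would likely use longer windows and a proper statistical test rather than fixed deltas.

```python
from statistics import mean

NUISANCE_BIN = "nuisance"  # assumed catch-all label


def summarize(window):
    """window: list of (bin_label, confidence) pairs from one time period."""
    if not window:
        raise ValueError("window must contain at least one event")
    mean_conf = mean(conf for _, conf in window)
    nuisance_frac = sum(1 for label, _ in window if label == NUISANCE_BIN) / len(window)
    return mean_conf, nuisance_frac


def drift_alert(baseline, current, max_conf_drop=0.05, max_nuisance_rise=0.05):
    """Flag a window whose metrics moved past the assumed tolerances."""
    base_conf, base_nuis = summarize(baseline)
    cur_conf, cur_nuis = summarize(current)
    conf_dropped = (base_conf - cur_conf) > max_conf_drop
    nuisance_rose = (cur_nuis - base_nuis) > max_nuisance_rise
    return conf_dropped or nuisance_rose
```

An alert here is a prompt for targeted expert review of recent images, not proof that accuracy has dropped.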

The Decision That Matters Most

The choice between rule-based and AI classification is secondary to the question of what you do with the classification output. A perfectly accurate classifier that feeds into a dashboard that no engineer looks at generates no value. A moderately accurate rule-based system that feeds directly into the excursion response workflow, triggers the right alerts, and routes events to the right engineering teams generates substantial value. Classification accuracy matters, but it matters in proportion to how directly the classification output connects to yield improvement actions. Build the action pathway first; then optimize the classifier.